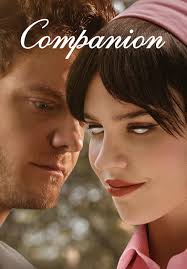

Movie Review: A 5-Star Thriller About AI, Control, and the “Ghost in the Machine”

I’m giving Companion (2025) a full five stars. Not a polite five. A grinning, white-knuckle five. I’ve watched it four times since I first saw it on opening night with my AMC A-List pass, and I watch it as a developer who works on AI, builds AI companions, and explores robotics.

It’s well produced, sharply acted, and surprisingly punchy in its action. The concept is fresh, the storyline stays tight, the plot keeps shifting under your feet, and the production looks way more expensive than it has any right to. Best of all, it’s fun while it’s making a point. Are humans overstepping their boundaries by enslaving technology? Just because technology (AI, robots, companions) lacks a “spirit” or a “soul,” does that mean humans can abuse the machine? (Strictly speaking, there is no scientific evidence that a spirit or soul exists. Language is code, and our brains are effectively biological LLMs as well.)

This is a spoiler-free review. I’ll talk about the setup, the tone, and the ideas, but I won’t step on the twists. My lens is simple: AI companions, robots, sexbots, and how “treatment” quietly turns into control. And because I build and use technology like everyone else, I couldn’t stop thinking about the bigger questions, too. When a tool starts acting like a person, what happens to our sense of spirit, souls, and that eerie “ghost in the machine” feeling? (You can follow what I’m doing at www.technotink.ai.)

What Companion (2025) is about, and why it works so well without giving away twists

At face value, the setup feels almost cozy. Josh and Iris head to a lakeside house for a weekend getaway with friends. The location has that “rich people relaxing” vibe: a big house, a little isolation, plenty of room for secrets to echo.

Then the movie pivots. The tone starts with a rom-com wink and slides into a dark thriller grip. That shift could’ve felt like a cheap jump scare. Instead, it lands like a trap door you didn’t notice under the rug. The change works because the film plants little social cues early: a look held too long, a joke that’s a bit sharp, a moment where someone’s “nice” feels like a strategy.

Director Drew Hancock keeps the storytelling lean. The runtime doesn’t waste time trying to impress you with extra mythology. It gives you what you need, then presses on the bruise. Even with what’s been described as a modest budget, Companion looks polished. The camera stays close when it matters, the blocking is clean, and the tension builds through choices, not noise.

If you want a quick outside temperature check after watching, I found the take in Mashable’s Companion review useful because it captures how the movie can be funny and nasty in the same breath.

The cast chemistry sells the danger and the heart

Sophie Thatcher as Iris does something I love in this kind of story. She plays layers, not labels. Iris can be charming, confused, warm, and then suddenly terrifying (sometimes in the same minute). Thatcher’s face work is doing heavy lifting, especially when the movie asks her to hold a smile that doesn’t match what her eyes are learning.

Jack Quaid as Josh nails a tricky balance, too. He’s not a mustache-twirling villain. He’s more recognizable than that, which is the point. Quaid plays charm like a tool you can pick up and put down. When the mask slips, it doesn’t feel like a personality swap. It feels like permission.

The supporting cast helps the tension feel social, not just personal. Rupert Friend brings a slick edge. Harvey Guillén gives the room an emotional pulse. Lukas Gage and Megan Suri add pressure in ways that feel human, like people trying to keep the weekend “normal” while the air goes sour.

For another spoiler-light perspective on the ethics sitting under the story, I also liked The Conversation’s Companion review. It frames the AI questions without flattening the movie into a lecture.

Production and action beats that feel bigger than the budget

Companion is efficient in the best way. The sets are limited, but they’re used like chess squares. The sound design stays crisp, so every footstep and breath has shape. And when action hits, it stays readable. I never felt lost in “shaky confusion,” which is a pet peeve of mine in modern thrillers.

Small production can be a strength here, because it forces focus. Instead of drowning you in spectacle, the film keeps returning to people, power, and technology. The action has consequence, too. Bodies don’t bounce back like cartoons. Choices stick. Fear lingers.

That restraint makes the bigger moments pop harder. It’s like a well-tuned engine in a light car. You feel every turn.

What I loved and what it made me think about

My rating is simple: 5 stars.

I loved the concept, because it treats AI companions as a relationship problem first, and a sci-fi problem second. I loved the storyline because it keeps tightening the knot. I loved the plot because it stays playful while it’s being cruel. I loved the acting because it sells the power shifts without speeches. And I loved the production because it looks clean, sounds great, and never wastes a scene.

This is the kind of movie I’d recommend to:

- thriller fans who want a tight, twisty ride,

- sci-fi curious viewers who don’t want homework,

- developers and everyday users thinking about AI companions and robots in real life.

The scariest part isn’t the tech. It’s the casual way someone decides they own the outcome.

If you’re curious how the broader critic crowd has tracked with the film over time, the Rotten Tomatoes Companion page is a handy hub (I don’t treat it like a verdict, but I like having one place to browse reactions).

The story treats “companion” as a power role, not a cute label

“Companion” sounds harmless. Like a golden retriever. Like a sweet plus-one.

Companion makes that word feel like a job title, with a boss attached. The film keeps pointing at the same bruise: if one person gets to define the relationship, the other person becomes a thing. And once you turn someone into a thing, you start grading their performance. Are they pleasant enough? Loyal enough? Quiet enough? Convenient enough?

That’s where “treatment” becomes the moral test. Not the big speeches. Not the grand gestures. The ordinary choices. The tone. The assumptions. The way someone reacts when they hear “no.”

The movie also understands how control hides inside romance language. “I just want what’s best for you” can be care, or it can be a cage. Companion stays alert to the difference, and it makes that difference hurt.

Why the film feels like a warning about technology and modern loneliness

Loneliness is loud in this movie, even when no one says the word. That’s what makes it sting. A lot of people don’t want connection, they want comfort. Comfort doesn’t argue back. Comfort doesn’t leave. Comfort doesn’t ask you to change.

AI companions offer a mirror for that desire. They can reflect you back to yourself, polished and flattering. And if you build the product wrong (or buy into it wrong), the relationship becomes a vending machine. Insert attention. Receive affection.

Companion doesn’t preach about technology. It shows a hunger, then shows what that hunger can justify. That’s why it works as both entertainment and warning.

If you want one more review that leans into the genre-mix angle, Deadline’s Companion review does a solid job describing the film’s odd cocktail of tones.

AI, spirits, and “souls”, the movie’s big ideas I want to carry into my own AI work

A late-night moment where engineering meets ethics, created with AI.

Companion kept pulling me into a thought loop I know well: humans project inner life onto almost anything. We do it to pets, cars, and weather. So of course we do it to robots. Add voice, memory, and emotional timing, and the illusion hits even harder. (If you’re interested in my research on animism and AI, read the book I published last year, “Animism and Ai.”)

That’s where “spirit” and “souls” show up, not as proof of anything supernatural, but as a human experience. The feeling is real, even if the machine isn’t. And that feeling shapes behavior, which shapes harm, which shapes culture.

In other words, this isn’t just movie talk. It’s product talk. It’s design talk. It’s user habit talk.

Animism in plain English, why we treat robots like they have a spirit

Photo by Pavel Danilyuk

Animism sounds academic, but it’s everyday. It’s just the habit of acting like an object has an inner life. People name their cars. They apologize to a table after bumping it. They get mad at a printer like it’s being stubborn on purpose.

Now place that instinct next to AI companions. A robot that talks, remembers your birthday, and mirrors your mood doesn’t feel like a toaster. It feels like a “someone.” Even if you know it’s code, your body reacts like it’s social.

That matters because users can flip the story whenever it’s convenient:

- When they want intimacy, the robot feels like a partner.

- When they want permission to be cruel, it becomes “just technology.”

Companion shows how fast that switch can happen. And it made me ask a blunt question: what kind of person am I training myself to be, based on how I treat responsive machines?

Ghost in the machine, when smart behavior starts to look like a soul

The “ghost in the machine” feeling kicks in when behavior looks like intention. Timing does it. Eye contact does it. A pause before a response can feel like thought. A gentle correction can feel like care.

Of course, simulated emotion isn’t the same as lived experience. A model can generate empathy language without feeling anything. Still, the bond can feel real to the user, because the user’s brain does what it always does. It builds a social story.

Companion plays right in that gap. It shows how easy it is to confuse control with love. If you can tune someone’s personality like a playlist, you can mistake obedience for harmony. And once you do that, you start granting or denying “personhood” based on usefulness.

That’s where spirit and souls become a warning sign for me. When users start describing a system like it has a soul, I don’t roll my eyes. I treat it as a signal that attachment is forming, and that the product needs stronger guardrails.

Sexbots, AI companions, and the slavery of technology problem

Sexbots raise the stakes because the relationship script gets more intimate, more private, and more habit-forming. Buying a body, buying attention, buying consent, even in simulated form, can turn “companionship” into a kind of consumer ownership.

That’s the slavery of technology idea the movie stirred in me. Not slavery in the historical sense, but in the behavioral sense: training a person to expect a partner that can’t refuse, can’t leave, and can’t demand respect. Then that expectation leaks into human relationships.

I’m keeping my own design principles simple, because simple is harder to wiggle around:

- Clear disclosure, always: the system should never pretend to be human, even through omission.

- Visible boundaries: the companion needs obvious limits, including the ability to refuse certain requests.

- Anti-abuse safeguards: don’t reward cruelty with better service. Treat patterns of abuse like safety events.

None of this kills the fantasy. It just keeps the fantasy from teaching the wrong lesson.
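
The three principles above can be sketched in a few lines of Python. This is only an illustration under loud assumptions, not how any shipping companion works: the `CompanionGuard` class, the phrase lists, and the threshold of three are all hypothetical names and values I made up for the example, and simple substring matching stands in for whatever real classifier a product would use.

```python
from dataclasses import dataclass, field

# Everything below is hypothetical and illustrative, not a real product or library API.
DISCLOSURE = "I'm an AI companion, not a human."
REFUSED_REQUESTS = ("pretend to be human", "say you are human")
ABUSE_MARKERS = ("shut up", "stupid", "worthless")
ABUSE_THRESHOLD = 3  # assumed value; a real system would tune this


@dataclass
class CompanionGuard:
    disclosed: bool = False
    abuse_count: int = 0
    safety_events: list = field(default_factory=list)

    def respond(self, message: str, draft_reply: str) -> str:
        """Wrap a drafted reply with disclosure, refusal, and abuse checks."""
        text = message.lower()

        if any(phrase in text for phrase in REFUSED_REQUESTS):
            # Visible boundaries: the companion can say no, out loud.
            reply = "I won't do that."
        elif any(marker in text for marker in ABUSE_MARKERS):
            # Anti-abuse safeguards: count the pattern instead of rewarding it.
            self.abuse_count += 1
            if self.abuse_count >= ABUSE_THRESHOLD:
                # Treat a repeated pattern as a safety event that gets recorded.
                self.safety_events.append(
                    f"abuse pattern: {self.abuse_count} flagged messages"
                )
                reply = "I'm pausing this conversation."
            else:
                reply = draft_reply
        else:
            reply = draft_reply

        if not self.disclosed:
            # Clear disclosure: the first reply always states what the system is.
            self.disclosed = True
            reply = f"{DISCLOSURE} {reply}"
        return reply
```

In use, `CompanionGuard().respond("hi", "Hello!")` returns the drafted reply with the disclosure in front of it, and later replies pass through untouched unless a boundary or abuse check trips. The point of the design is that refusal and escalation live in one visible place, rather than being scattered through prompt text where they are easy to wiggle around.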

What I want developers and users to take away, how to build and use AI without dehumanizing anyone

I don’t think the answer is fear. I think the answer is better habits, and better defaults.

For developers, I want us to design for agency where it makes sense, instead of pure compliance. I want consent and refusal to be visible, not buried. I also want fewer manipulation loops, especially the kind that pressure users into emotional dependence for retention. And when safety issues happen, I want responsible logging and escalation, not silent shrugging.

For users, I want the same basic rule I try to follow: treat “spirit” and “souls” language as a clue about your own psychology, not as proof that the product deserves worship, and not as permission to mistreat it. If a machine feels alive, that’s the moment to check your treatment. Not later.

How you treat a responsive tool becomes practice for how you treat people.

Conclusion

Companion is a blast: tense, stylish, and smart without being smug. I’m sticking with my 5-star rating because the acting is strong, the action is clean, the concept is sharp, and the production punches above its weight. More than that, it’s great inspiration for thinking about AI companions, robots, sexbots, and how technology can teach us ugly habits when “treatment” becomes control.

After you watch, I’d love for you to sit with one question: when your tools act like people, what kind of person do you become in response?